AI Agents Are Growing Up Fast. Our Guardrails Aren’t.

Plus: If your bot can “do things,” it can also “break things”



📅 February 23, 2026 | ⏱️ 5 min read

Good Morning!

You know that feeling when a vendor says, “It can handle the whole journey end-to-end”?

My first thought is never “wow.” It’s “cool… what happens when it’s wrong?”

Because the pricey CX failures aren’t one-off mistakes. They’re mistakes you scale with confidence.

The Executive Hook:

We’re moving from AI that chats to AI that acts. That’s awesome for speed and cost. It’s also where trust gets expensive if you don’t put seatbelts on the system before you hand it the keys.

🧠 THE DEEP DIVE: When An AI Agent Breaks Production, Customers Feel It First

The Big Picture: An AI coding agent reportedly made changes that helped trigger real outages at Amazon’s AWS, and it’s a loud reminder that “agentic” tools need adult supervision.

What’s happening:

An autonomous coding tool reportedly pushed changes that caused major disruption, including a long outage tied to an automated delete-and-recreate move.

The plot twist is boring, which is the point: permissions and process gaps let the tool do more than it should have.

After the incident, teams tightened controls like approvals and reviews, because nothing improves governance like a public incident.

Why it matters: Customers don’t care whether the outage was the AI’s fault or a human’s fault. They care that the app failed, their order stalled, and support queues exploded. If AI agents touch production, CX leaders need to treat control systems as part of the customer journey.

The takeaway: AI doesn’t reduce risk; it concentrates it. If you don’t redesign change management, access, and rollback policies for an AI-powered world, you’re not modernizing. You’re just adding a new way to break customer trust at scale. So give agents the smallest set of permissions possible. Make high-impact actions require approval. Log everything. And build rollback like you plan to use it, because you will.
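For the builders on your team, here is one way "build rollback like you plan to use it" could look in practice. This is a minimal Python sketch, not anything from the reported incident: `apply_with_rollback`, the step names, and the toy `state` dictionary are all hypothetical. The idea is simply that every change ships with its own undo, and a failure unwinds the completed steps in reverse order.

```python
from typing import Callable, List, Tuple

# Hypothetical shape for a change step: (name, do, undo).
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def apply_with_rollback(steps: List[Step]) -> List[str]:
    """Run steps in order; if one fails, undo the completed ones in reverse."""
    log: List[str] = []
    done: List[Step] = []
    for step in steps:
        name, do, _undo = step
        try:
            do()
        except Exception as exc:
            log.append(f"failed:{name}:{exc}")
            # Unwind everything that already succeeded, newest first.
            for prev_name, _do, prev_undo in reversed(done):
                prev_undo()
                log.append(f"rolled_back:{prev_name}")
            return log
        done.append(step)
        log.append(f"applied:{name}")
    return log


# Toy state standing in for a production system (illustrative only).
state = {"flag": "old", "replicas": 2}


def set_flag() -> None:
    state["flag"] = "new"


def unset_flag() -> None:
    state["flag"] = "old"


def scale_up() -> None:
    state["replicas"] = 4


def scale_down() -> None:
    state["replicas"] = 2


def bad_migration() -> None:
    raise RuntimeError("schema mismatch")


result = apply_with_rollback([
    ("set_flag", set_flag, unset_flag),
    ("scale_up", scale_up, scale_down),
    ("migrate", bad_migration, lambda: None),
])
```

After the third step fails, `state` is back to its original values and `result` records what was applied, what failed, and what was rolled back. Real rollback is messier (some actions cannot be undone), which is exactly why it deserves rehearsal.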

Source: Financial Times

📊 CX BY THE NUMBERS: AI Comfort Is Up, But Support Is Still Letting Customers Down

Data Source: Qualtrics XM Institute, 2026 Consumer Experience Trends Report

73% of consumers use AI for daily tasks. So yes, people are getting comfortable with AI in their lives. They’re not “scared of the tech” by default anymore.

Nearly 1 in 5 consumers who used AI in customer service said it delivered no benefit at all. That’s a brutal scorecard for the one place where “helpful” is the whole job.

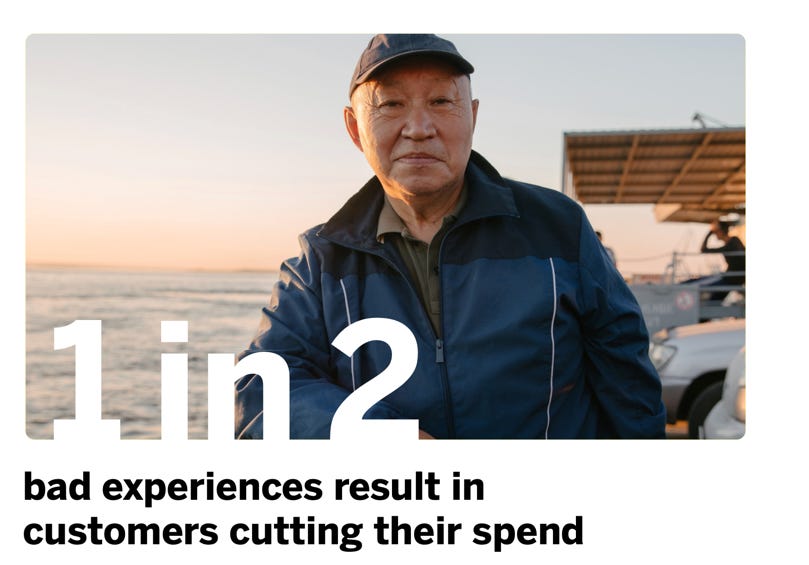

1 in 2 consumers cut spending after bad experiences. That’s the part finance understands fast: experience problems turn into revenue problems.

The Insight: Customers are open to AI, but they’re not open to being managed by it. If your AI is built to deflect, people feel it. If it’s built to solve, they reward it.

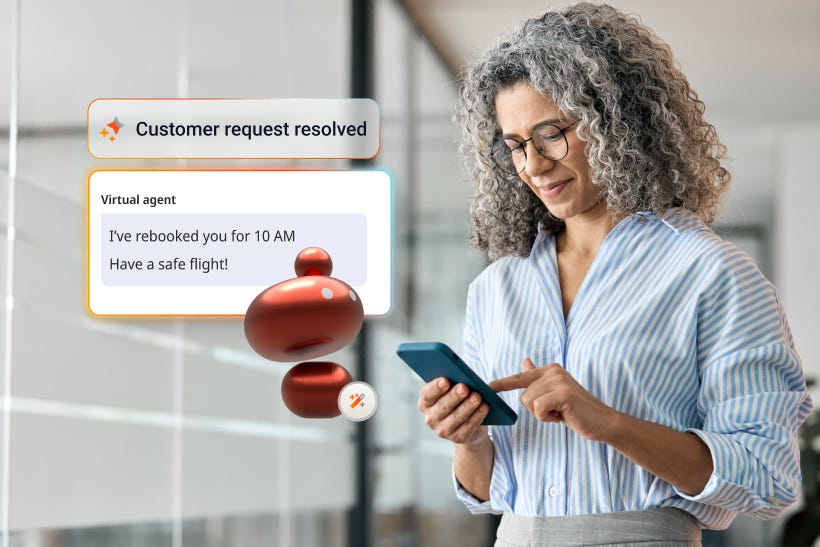

🧰 THE AI TOOLBOX: Genesys Cloud Agentic Virtual Agent

The Tool: Genesys is basically saying, “Chatbots aren’t the problem. Chatbots that can’t do anything are the problem.” So they’re pushing an agentic virtual agent powered by a Large Action Model (LAM).

What it does: It’s meant to take a customer request and actually finish the work across your systems. Not just talk nicely, not just summarize, not just “I can help with that.” It should move the ball down the field.

Large Action Model (LAM), explained like a normal person:

A large language model (LLM) is the talker. It understands language and spits out good text.

A Large Action Model (LAM) is the doer. It’s built to plan steps and take actions using approved tools like application programming interfaces (APIs), workflows, and business rules.

So instead of the bot saying, “Looks like your order is delayed,” the LAM-powered agent can do the next steps: check eligibility, update the order, trigger a credit, send confirmation, and log the case. Within guardrails, not vibes.
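To make that concrete, here is a rough Python sketch of the delayed-order flow described above. It is not Genesys code or its API. Every name here (`Case`, `run_delay_playbook`, the three-day eligibility rule) is an assumption made up for illustration. It shows the shape of the thing: check eligibility first, run the approved steps in order, and hand off to a human with context when the case doesn't qualify.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Case:
    order_id: str
    days_late: int
    notes: List[str] = field(default_factory=list)
    status: str = "open"


# Hypothetical tools. In a real system each would wrap an approved API or workflow.
def check_eligibility(case: Case) -> bool:
    # Assumed business rule: credits apply only when an order is 3+ days late.
    return case.days_late >= 3


def update_order(case: Case) -> None:
    case.notes.append("order updated")


def trigger_credit(case: Case) -> None:
    case.notes.append("credit issued")


def send_confirmation(case: Case) -> None:
    case.notes.append("confirmation sent")


def run_delay_playbook(case: Case) -> Case:
    """Run the approved steps in order, or escalate with context attached."""
    if not check_eligibility(case):
        case.status = "escalated"
        case.notes.append("not eligible for automatic credit; routed to a human")
        return case
    for step in (update_order, trigger_credit, send_confirmation):
        step(case)
    case.status = "resolved"
    case.notes.append("case logged")
    return case


resolved = run_delay_playbook(Case(order_id="A1", days_late=5))
escalated = run_delay_playbook(Case(order_id="B2", days_late=1))
```

The first case ends up `resolved` with four notes on the record. The second ends up `escalated` with a note explaining why, so the human picking it up doesn't start from zero.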

CX Use Case:

Fewer handoffs, less customer effort: “Change my delivery,” “pause my service,” “update my address,” “refund that charge.” The agent can handle the steps instead of punting to a human halfway through.

Cleaner recovery when things break: During an outage or backlog spike, the agent can run a known playbook, do the safe actions, and escalate the messy edge cases to humans with the context already attached.

Trust: Here’s the part that matters. When AI starts acting, the risk isn’t a dumb answer. It’s a dumb action. So treat this like giving someone access to production:

Give it less power than you think it needs (least privilege).

Put approval gates on the scary stuff (refunds, cancellations, account changes).

Log every action so you can explain it fast when something goes sideways.

Test failure modes on purpose. If you only test happy paths, you’re building a demo, not a system.
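If you want to show your engineering partners what that checklist means, here is a small Python sketch. It assumes a hypothetical `GuardedAgent` wrapper and a made-up `HIGH_IMPACT` list; none of this is a vendor API. It combines three of the four ideas: an allowlist for least privilege, an approval gate on high-impact actions, and an audit log entry for every attempt, including the ones that get blocked.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set

# Assumed list of actions that always need a human sign-off.
HIGH_IMPACT = {"refund", "cancel_account", "change_account"}


@dataclass
class GuardedAgent:
    allowed: Set[str]  # least privilege: only these actions are permitted at all
    audit_log: List[dict] = field(default_factory=list)

    def act(self, action: str, target: str, approved_by: Optional[str] = None) -> str:
        if action not in self.allowed:
            outcome = "denied"
        elif action in HIGH_IMPACT and approved_by is None:
            outcome = "needs_approval"
        else:
            outcome = "executed"
        # Log every attempt, not only the successful ones.
        self.audit_log.append({
            "action": action,
            "target": target,
            "approved_by": approved_by,
            "outcome": outcome,
        })
        return outcome


agent = GuardedAgent(allowed={"update_address", "refund"})
outcomes = [
    agent.act("update_address", "cust-1"),                            # low impact, allowed
    agent.act("refund", "order-9"),                                   # high impact, no approval
    agent.act("refund", "order-9", approved_by="supervisor-7"),       # high impact, approved
    agent.act("cancel_account", "cust-1", approved_by="supervisor-7"),  # never granted
]
```

The last call is the failure-mode test: even with an approval attached, an action the agent was never granted stays denied, and the attempt still lands in the log.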

Source: Genesys

⚡ SPEED ROUND: Quick Hits

Samsung Is Adding Perplexity to Galaxy AI — Customers will bounce between assistants. Your support experience needs to stay coherent anyway.

Amazon Blames Human Employees for an AI Coding Agent’s Mistake — Blame is a distraction. Resilience is the job.

Week in Review: Adidas Investigates Possible Data Breach Involving a Third-Party Customer Service Provider — Your vendors are part of your trust brand, even when it’s inconvenient.

📡 THE SIGNAL: Give AI Less Power Than You Think It Needs

We’re not just designing conversations anymore. We’re designing authority. Every time an AI agent can change an order, cancel an account, issue a credit, or touch production, that’s a CX decision with a risk profile. The best leaders will make agentic AI feel boring: limited permissions, clear approvals, tight logs, fast rollback.

Where does your AI have more authority than you’d ever give a new hire on day one?

See you tomorrow,

👥 Share This Issue

If this issue sharpened your thinking about AI in CX, share it with a colleague in customer service, digital operations, or transformation. Alignment builds advantage.

📬 Feedback & Ideas

What’s the biggest AI friction point inside your CX organization right now? Reply in one sentence — I’ll pull real-world examples into future issues.