AI Agents Need Managers Now

Plus: IBM’s live dashboard points to the next CX battleground: oversight, not output

Your daily signal on AI and CX — minus the hype.

📌 DCX Stat of the day: 91% of customer service leaders say they are under pressure to implement AI in 2026. (Source: Gartner)

In this issue:

→ IBM shows the next control layer

→ Why observability is moving into CX

→ The metric shift hybrid teams will force

→ Basecamp opens its doors to agents

→ Voice bots just rang 3,000 pubs

CONTEXT

The new AI problem is supervision

The first wave of AI was “can we build agents?” The next wave is “who’s watching them?”

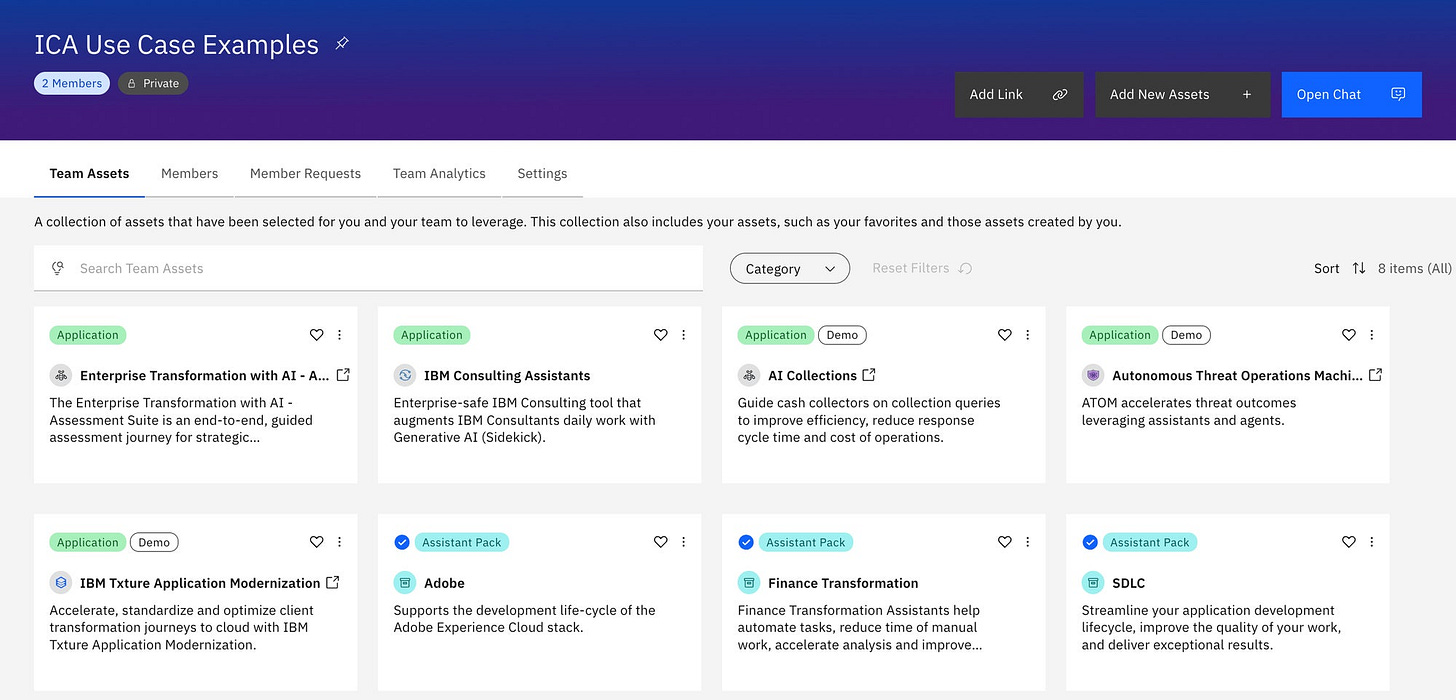

IBM’s live dashboard is an early clue. Its consulting arm now uses AI workers on more than 150 client engagements. In one January security use case, agents completed 52,000 investigations after task time was cut from about 45 minutes to a few minutes.

Once agents are doing that kind of work, the hard part stops being the model demo. It becomes the supervision model around it.

WHY IT MATTERS

CX leaders are about to inherit the same problem in service and digital journeys.

Think about a billing adjustment, a cancellation save, or a fraud flag. If an agent makes those calls without clear visibility and guardrails, you feel it in complaints, chargebacks, and churn.

As agents start handling real customer tasks, the weak point shifts to the control layer: who can see what the agent did, when a human steps in, how decisions get audited, and how fast you can recover when it goes wrong.

IBM’s Enterprise Advantage offer leans heavily on governance, brand control, accuracy, cost, and human validation. That’s a signal: autonomy by itself is no longer an interesting story. Governed autonomy is the story.

EXEC SUMMARY

🎯 Exec Briefing: Why this should be on your agenda

IBM is pushing a real-time operating model where humans monitor digital workers and intervene on important outputs, and its consulting platform already supports more than 150 client engagements. Its Enterprise Advantage service turns that into a packaged way of working for customers.

For CX leaders, that creates an immediate tension. More agent autonomy can cut effort and cost. It also increases the number of places a customer can get a wrong answer, a messy handoff, or a decision nobody can clearly explain.

The edge now goes to teams that manage agents like a workforce, not a feature.

📬 Copy-Paste Take: Send this to your COO

AI agents are moving into customer work, which means the next advantage will come from who can see them, govern them, and step in before a bad answer becomes a service problem.

🔎 Deep dive

The control room is becoming the product

The interesting shift isn’t that vendors can spin up agents. It’s that they’re now building the management layer around them.

IBM’s Enterprise Advantage pitch is basically a playbook and control room for digital workers: structure, context, execution, and built‑in human oversight for workflows that rely on agents, including customer self‑service and regulatory reporting. That’s the tell. Autonomy alone is hard to buy. Governed autonomy is what gets funded.

Talkdesk is coming at the same problem from the contact center. Its CXA Operations Center is built around observability, evaluation, and live monitoring so AI and human agents can be managed as one workforce. That’s a cleaner mental model for CX leaders than “AI handles simple stuff.”

The simple stuff is not your risk. The risk is:

When you can’t see what the agent decided or why

When nobody owns the thresholds for escalation

When recovery takes three transfers and forces the customer to repeat their story

The control room – the place where you see agent behavior, intervene, and repair – is becoming the real product. If you buy agents without that control room, you’re buying blind.

Operational takeaway: Treat “agent observability and oversight” as its own capability, budget line, and owner, not a byproduct of a vendor demo.

OPERATOR PLAYBOOK

Audit your hybrid service model before volume arrives

Pick one live journey where AI is already doing more than drafting – for example, password resets, appointment changes, billing questions, or order status.

For that one flow, answer four questions:

Authority: Where can the agent act without explicit human approval – and is that level of authority written down anywhere?

Observability: Where can a human reconstruct what the agent did, what it saw, and why it chose that path?

Escalation: Where does escalation happen only after confusion (multiple transfers, “agent not helpful” surveys) instead of before real risk (billing disputes, compliance exposure, churn‑likely events)?

Recovery: Where does recovery depend on the customer repeating context, because your systems don’t preserve the agent’s decisions and prior steps?
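One way to make those four questions concrete is to ask what a single logged agent decision would have to contain. The sketch below is a hypothetical illustration, not any vendor's schema: `AgentDecision`, `needs_human`, `AUTHORITY_LIMITS`, the action names, and the $50 limit are all assumptions made up for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical written-down authority: the largest amount the agent may act on
# without human approval, per action type. Values are illustrative only.
AUTHORITY_LIMITS = {"billing_adjustment": 50.00, "order_status": float("inf")}


@dataclass
class AgentDecision:
    journey: str            # which customer journey this belongs to
    action: str             # what the agent did
    amount: float           # monetary impact, 0 if none
    inputs_seen: list[str]  # what the agent saw (observability)
    rationale: str          # why it chose this path (observability)
    timestamp: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def needs_human(decision: AgentDecision) -> bool:
    """Escalate before risk: unlisted actions or amounts over the limit go to a person."""
    limit = AUTHORITY_LIMITS.get(decision.action)
    return limit is None or decision.amount > limit


refund = AgentDecision(
    journey="billing question",
    action="billing_adjustment",
    amount=120.0,
    inputs_seen=["latest invoice", "customer message"],
    rationale="customer reports a duplicate charge",
)
print(needs_human(refund))  # True: 120 is above the assumed 50 limit
```

If every decision is stored in a record like this, a human who picks up the case can read `inputs_seen` and `rationale` instead of asking the customer to start over, which is the Recovery question in practice.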

Then pressure‑test the handoffs:

Can a human jump into the journey mid‑stream, see the agent’s history, and fix the issue without forcing the customer to start over?

Do you have at least one metric or alert that would tell you, within a day, that this agent has started making bad decisions at scale?

Ask your team: If this agent is wrong for 1,000 customers this week, where do we see it first – and who is accountable for acting on that signal?

Signal: If you can’t trace the handoff and can’t name the owner of the fix, you don’t control the experience.

📈 Market Reality Check

The pressure is already here

Gartner reports that 91% of customer service leaders say they face pressure from executive leadership to implement AI in 2026. It also says nearly 80% of organizations plan to transition at least some agents into new roles, and 84% expect to add new skills to the agent role.

The metric shift follows. Hybrid teams make some of the old comfort metrics less useful on their own. Average handle time and containment don’t tell you whether AI made things better or just hid problems. You need to see:

how often humans are correcting agent decisions

how long it takes to detect a bad pattern

what happens to NPS or churn when an agent, not a person, is the first touch

Those are management metrics, not model metrics.
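The first two of those metrics are simple arithmetic once agent decisions are logged. Here is a minimal sketch, assuming each logged decision carries a `human_corrected` flag and that someone records when a bad pattern was first flagged. The field names, function names, and sample data are hypothetical.

```python
from datetime import datetime


def correction_rate(decisions: list[dict]) -> float:
    """Share of agent decisions that a human later changed."""
    if not decisions:
        return 0.0
    corrected = sum(1 for d in decisions if d["human_corrected"])
    return corrected / len(decisions)


def hours_to_detect(first_bad_decision: datetime, flagged_at: datetime) -> float:
    """Hours between the first bad decision and someone flagging the pattern."""
    return (flagged_at - first_bad_decision).total_seconds() / 3600


# Illustrative sample: four logged decisions, two of them corrected by a human.
sample = [
    {"id": 1, "human_corrected": False},
    {"id": 2, "human_corrected": True},
    {"id": 3, "human_corrected": False},
    {"id": 4, "human_corrected": True},
]
print(correction_rate(sample))  # 0.5
print(hours_to_detect(datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 6, 15, 0)))  # 30.0
```

The third metric, NPS or churn by first touch, needs survey and retention data joined to the same decision log, so it is left out of this sketch.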

Pressure + weak oversight = expensive speed.

🧰 Tool Worth Knowing

Basecamp Agents

What it does: Basecamp has launched a technology preview that lets teams bring their own AI agents into Basecamp through an official CLI, agent skill, and SDK. Agents can write docs, manage to-dos, answer check-ins, and work through the terminal with tools such as Claude, Codex, and Cursor.

CX use case: Useful for internal CX and journey teams that want agents to handle project hygiene, updates, follow-through, and status capture inside an operating system people already use.

Worth watching because: This is what happens when agent access moves out of the lab and into the tools where work actually gets managed. That can remove admin drag. It can also create quiet messes if permissioning, audit trails, and ownership stay fuzzy.

Bottom line: The interesting part is not the AI. It is that a work platform is preparing for agents to behave like teammates.

⚡ 90-Second CX Radar

When AI Called Every Pub in Ireland

An AI voice agent made more than 3,000 calls to Irish pubs asking the price of a Guinness, and only a handful of people realized they were speaking with a machine. The stunt is funny. The CX signal is not. Voice agents can now collect real-world commercial data at scale, which means local businesses are getting pulled into AI-mediated discovery whether they planned for it or not.

More than 80% of the Fortune 500 use active AI agents

Microsoft says more than 80% of Fortune 500 companies use active AI agents built with low-code or no-code tools, and 29% of employees have turned to unsanctioned AI agents for work tasks. That is a useful warning. Agent sprawl is going to hit customer-facing work faster than many governance teams expect.

🧭 Your Move

Pick one customer journey where AI is already doing more than drafting. Then map who owns the agent, who can see its actions, and who fixes the fallout when it gets something wrong.

That sounds less glamorous than another demo. It is also where trust, cost-to-serve, and service quality are actually won or lost.

The companies that manage AI best will look boring from the outside. That is usually a good sign.

Until tomorrow,

