AI Is Breaking Down in Returns, Refunds, and Cancellations

Plus: the leave journey is becoming a real test of consumer AI

Your daily signal on AI and CX — minus the hype.

📌 DCX Stat of the day: Nearly 1 in 5 consumers who used AI for customer service said they saw no benefit, a failure rate Qualtrics said was almost four times higher than for AI use in general.

In this issue:

→ Exit journeys are now the stress test

→ Returns data shows the trust gap clearly

→ One playbook for pressure-testing leave flows

→ A fraud tool with a better instinct

🔎 Deep dive

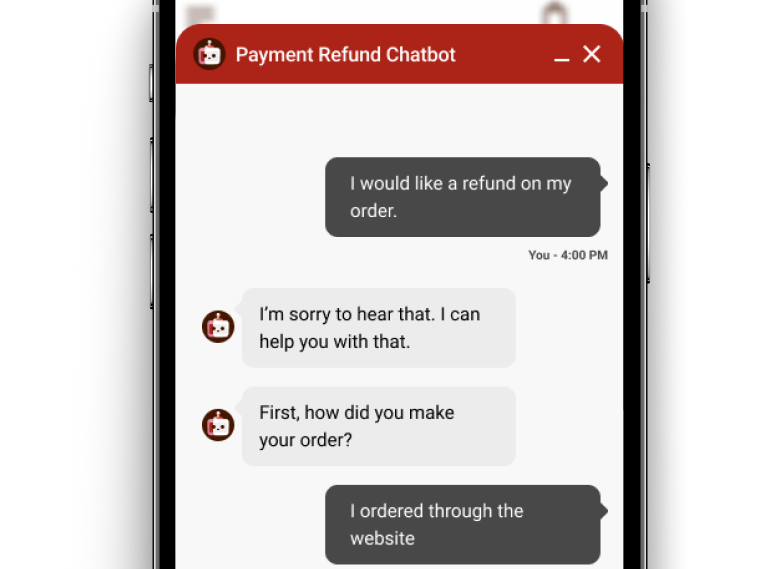

Refund chatbots are making a bad moment worse

AI can get away with a lot in low-stakes moments. A weak answer during product discovery is irritating. A weak answer when someone is trying to get their money back feels like stalling. That is a different kind of failure.

That is the risk showing up here. When a refund bot loops, deflects, or slows down access to a human, the customer does not experience that as innovation. They experience it as resistance. The bot becomes the company’s way of saying no without saying no.

That is why refund and complaint journeys deserve more scrutiny than the front end of the experience. If AI makes those moments feel harder, the savings story falls apart fast. You may cut contacts in the short term. You also raise the odds of repeat complaints, trust damage, and churn.

Consumer AI is starting to get judged at the point where the customer wants out. That is where weak service design gets exposed.

More: CNBC

OPERATOR PLAYBOOK

Audit one journey where the customer is trying to leave

Pick one exit-stage flow this week. Returns. Refunds. Cancellation. Billing reversal. Something with money, urgency, and elevated emotion.

Audit that flow for four things:

Can the customer see status, rules, and next steps clearly?

Can they reach a human quickly when the case involves money, timing, or exceptions?

Is fraud control targeted, or are you spreading suspicion across everyone?

Are you tracking true resolution, not just containment or deflection?

Then run the tougher test. What happens when the customer is angry, confused, or already on their second contact?

Ask your team: Where does AI reduce effort for the customer, and where does it mainly reduce effort for us?

Signal: If your AI makes exits feel harder, the savings story is probably overstated.
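For the fourth audit question, here is a minimal sketch of how containment and true resolution can tell different stories on the same contacts. Everything in it is an assumption for illustration: the `Contact` fields, the 7-day repeat window, and the sample data are hypothetical, not drawn from any vendor or from the sources cited in this issue.

```python
from dataclasses import dataclass


@dataclass
class Contact:
    """One support contact in an exit flow (hypothetical schema)."""
    customer_id: str
    handled_by_ai_only: bool    # no human handoff during this contact
    issue_resolved: bool        # customer got the outcome, e.g. refund issued
    repeat_within_7_days: bool  # customer came back about the same issue


def containment_rate(contacts: list[Contact]) -> float:
    """Share of contacts that never reached a human."""
    if not contacts:
        return 0.0
    return sum(c.handled_by_ai_only for c in contacts) / len(contacts)


def true_resolution_rate(contacts: list[Contact]) -> float:
    """Share of contacts resolved with no repeat contact afterwards."""
    if not contacts:
        return 0.0
    resolved = [
        c for c in contacts
        if c.issue_resolved and not c.repeat_within_7_days
    ]
    return len(resolved) / len(contacts)


# Made-up sample: three of four contacts were "contained" by the bot,
# but only two of four were actually resolved without a repeat contact.
sample_contacts = [
    Contact("a", handled_by_ai_only=True, issue_resolved=True, repeat_within_7_days=False),
    Contact("b", handled_by_ai_only=True, issue_resolved=False, repeat_within_7_days=True),
    Contact("c", handled_by_ai_only=True, issue_resolved=True, repeat_within_7_days=True),
    Contact("d", handled_by_ai_only=False, issue_resolved=True, repeat_within_7_days=False),
]

print(f"Containment: {containment_rate(sample_contacts):.0%}")
print(f"True resolution: {true_resolution_rate(sample_contacts):.0%}")
```

On this invented sample, containment reads 75% while true resolution reads 50%. The gap between the two numbers is the part of the savings story worth questioning.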

📈 Market Reality Check

Consumers will use AI in returns. They just will not forgive a bad experience.

Ada found that 55% of shoppers had already made or planned to make a return after the holiday season. It also found that 60% would be likely to use AI-powered customer service if it could instantly answer questions and process a return. That is the opening.

The warning sits right beside it. Only 36% said they were very satisfied with the returns process, and 57% said a poor returns experience would reduce their likelihood of buying from that brand again. Consumers are open to automation here. They are not giving brands a pass for making returns slower, colder, or harder to resolve.

AI acceptance + poor recovery = preventable churn

🧰 Tool Worth Knowing

Return Vision

What it does: Happy Returns, owned by UPS, offers an AI tool called Return Vision to flag suspicious returns for human audit.

CX use case: It gives retailers a way to tighten fraud review without turning the entire returns process into a hassle for everyone else.

Worth watching because: This is a smarter pattern. Apply scrutiny at the edges, not across the whole journey. That protects both trust and cost-to-serve better than blanket friction.

Bottom line: Returns AI gets more useful when it helps teams isolate bad actors without punishing good customers.
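To make the "scrutiny at the edges" pattern concrete, here is a rough sketch of threshold-based routing. It is a generic illustration, not how Return Vision works: the `route_return` function, the risk scores, and the 0.9 threshold are all hypothetical.

```python
def route_return(risk_score: float, review_threshold: float = 0.9) -> str:
    """Send only high-risk returns to a human; approve the rest instantly.

    risk_score is assumed to be a 0-to-1 output from some fraud model
    (hypothetical). The threshold is an illustrative value, not a
    recommendation.
    """
    if risk_score >= review_threshold:
        return "human_audit"
    return "auto_approve"


# Made-up scores for five returns; only one crosses the threshold.
scores = [0.05, 0.12, 0.95, 0.30, 0.08]
routes = [route_return(s) for s in scores]
share_reviewed = routes.count("human_audit") / len(routes)

print(routes)
print(f"Share sent to human audit: {share_reviewed:.0%}")
```

The design choice is the point: friction lands on the one flagged return, and the other four customers never see it.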

⚡ 90-Second CX Radar

Airbnb says a third of its customer support is now handled by AI in the U.S. and Canada

This matters because it shows a major consumer brand running AI support at scale, with AI already handling roughly a third of support issues in North America. The useful CX question is not whether AI can take volume. It is what happens when those issues move from simple support into exceptions, disputes, credits, and recovery. That is where the leave-stage risk starts to show up.

NGL refund claims show how AI-adjacent deception can turn into a customer recovery problem

The FTC says NGL used fake messages to push users toward paid subscriptions, and an earlier order halted deceptive claims around AI content moderation. Now the agency is running a refund claims process for users affected by unauthorized charges.

🧭 Your Move

The smartest next step is not another shiny pilot.

Pick one leave-stage journey where AI is already involved. Pressure-test it. Look at trust, cost-to-serve, repeat contact, and service quality together. That is where the real answer is.

Good AI in CX should make leaving feel clear, fair, and fast. Anything else will be felt as resistance.

Until tomorrow,

👥 Share This Issue

Think of one person who’s wrestling with AI in CX right now and forward this to them.
