Is Your AI Strategy Improving CX or Just Making Friction Cheaper?

DCX Links April 12

Welcome to the DCX weekly roundup of customer experience insights!

AI is finally changing CX work in real ways—not just with faster content or cheaper tickets, but by pulling CX closer to the live operation of the business. Done well, that means spotting friction earlier, responding faster, and shaping outcomes before customers feel the pain.

There’s a catch: AI will scale whatever you point it at. Aim it at cost per contact and you risk industrializing bad experiences. Aim it at customer value—with clean data and clear guardrails—and you remove effort, improve satisfaction, and free teams to focus on the problems that genuinely need human judgment.

This week’s edition leans into that fork in the road. You’ll see:

What real‑time CX design looks like in practice.

How optimizing the wrong metric quietly erodes loyalty.

Where AI breaks down as a “researcher.”

Why agentic AI depends more on your data plumbing than your demos.

A support stat line and case study you can steal as a playbook.

My take: AI isn’t the problem. Most CX teams are just pointing it at the wrong outcomes.

The thread running through all of it: is your AI strategy improving customer experience—or just making friction cheaper?

Let’s dig in.


From rearview CX to real‑time design

Most CX teams weren’t built for real time. They were built for post-mortems: long survey cycles, NPS readouts, churn analyses, and “what went wrong” decks. Emma Sopadjieva, Head of Customer Experience Strategy at Samsara, is arguing for something different: CX that sits much closer to the live operation of the business, where AI helps you see signals as they happen and adjust the experience before it turns into churn.

Why it matters

Instead of just digging through old data, CX teams can start acting like experience designers. Real-time signals, product telemetry, and cross-functional customer data mean you can spot friction while it’s forming and intervene before a customer opens a ticket or cancels.

The mindset move is from “data archaeology” to “experience architecture.” Less explaining what happened last quarter, more shaping what happens this afternoon.

What stands out

You get a picture of CX where the listening is mostly ambient. Click patterns, usage drops, support pings, even tone of voice all become early-warning signals for customer health. That’s very different from waiting on a quarterly survey readout.

The bottom line

The role of CX is expanding. The job isn’t just to measure the customer experience and report the score. It’s to help orchestrate that experience in something close to real time, with a tighter line to churn, retention, and revenue.

Try this: pick one critical journey (onboarding, renewal, or outage) and define what “real‑time CX” would actually mean—what signals you’d watch hourly, what thresholds would trigger action, and who owns those decisions today.
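
If it helps to make that exercise concrete, here is a minimal sketch of what "signals, thresholds, and owners" could look like once written down. Everything in it is an assumption for illustration: the signal names, the threshold values, and the owning teams are made up, and a real version would read from your own telemetry rather than a hard-coded dictionary.

```python
from dataclasses import dataclass


@dataclass
class SignalRule:
    name: str                     # hypothetical signal name
    threshold: float              # value at which someone should act
    owner: str                    # team that owns the decision
    higher_is_worse: bool = True  # False for signals where a drop is the problem


def triggered(rules, readings):
    """Return (signal, owner) pairs whose latest reading crosses its threshold."""
    alerts = []
    for rule in rules:
        value = readings.get(rule.name)
        if value is None:
            continue  # no reading this hour; skip rather than guess
        if rule.higher_is_worse:
            crossed = value >= rule.threshold
        else:
            crossed = value <= rule.threshold
        if crossed:
            alerts.append((rule.name, rule.owner))
    return alerts


# Illustrative onboarding rules; every name and number here is invented.
ONBOARDING_RULES = [
    SignalRule("setup_errors_per_hour", 20, "Product"),
    SignalRule("support_contacts_per_hour", 50, "Support"),
    SignalRule("daily_active_share", 0.40, "Customer Success", higher_is_worse=False),
]

hourly = {
    "setup_errors_per_hour": 27,
    "support_contacts_per_hour": 31,
    "daily_active_share": 0.35,
}
alerts = triggered(ONBOARDING_RULES, hourly)
print(alerts)
```

The useful part is less the code than the table it forces you to fill in: if you can't name an owner for a threshold, that gap is the first thing to fix.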

🔗 Go Deeper: CXO Outlook

When AI hits the wrong target, customers pay the price

Here’s the uncomfortable truth: AI will happily optimize whatever you tell it to, even if that goal quietly erodes your customer base.

Why it matters

A lot of leaders still treat each touchpoint like its own little optimization project. So you end up with a perfectly “efficient” IVR, strong deflection in chat, tight authentication rules, and a support script that hits all the checklist items. On paper, each piece looks great. In reality, the customer journey is miserable and loyalty tanks.

AI takes this pattern and speeds it up. If your north star is cost per contact, it will run hard at that, and it may be incredibly effective at degrading long-term customer value.

What stands out

The Booking.com example is the contrast: they use AI to remove friction and add context, which drives both lower cost per reservation and higher satisfaction. That’s what happens when the goal is customer value, not just expense reduction.

The bottom line

Treat this like a guardrail for your AI roadmap. If your primary metric is efficiency, don’t be surprised when the machine gets efficient at damaging the relationship. The smarter move is to point AI at outcomes that matter over time: loyalty, quality of customers, and lifetime value—not just cheaper tickets. If your AI roadmap is obsessed with efficiency but vague on customer value, you don’t have a technology gap—you have a strategy gap.

Try this: list your current AI or automation initiatives and write down the primary success metric for each. If most of them are cost‑first, decide where you’ll shift to a customer‑value metric like retention, LTV, or high‑value behaviors.

🔗 Go Deeper: Bain & Company

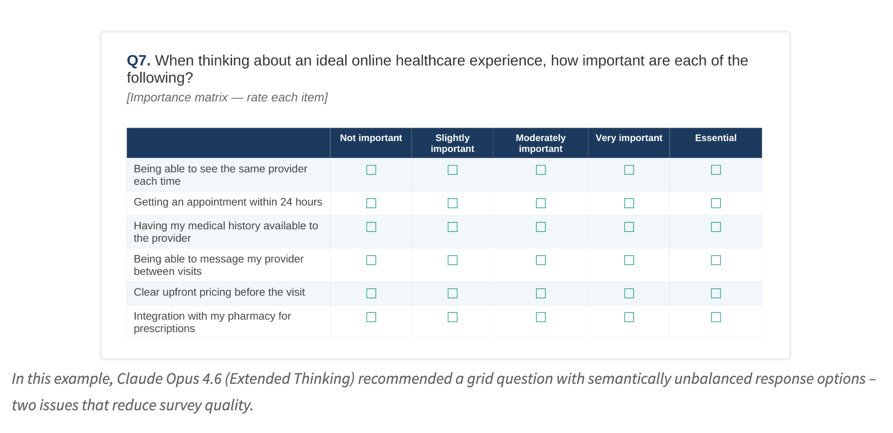

AI can draft your survey. It still can’t think like your best researcher.

If you’ve ever asked AI to draft a survey, you know it can spit out something that looks pretty polished in seconds. That’s exactly the risk.

Why it matters

AI is strong at the surface-level stuff: clear phrasing, logical sections, clean formatting. Where it struggles is the judgment calls that separate “fine” surveys from ones that produce reliable, decision-worthy data.

Those misses don’t always jump out. You only feel them later when your insights are fuzzy, contradictory, or weirdly easy to misread.

What stands out

AI still walks into the classic survey traps: too many questions, fatiguing grids, inconsistent scales, and demographic questions that show up too early.

The bottom line

For CX teams, the danger isn’t just bad surveys—it’s fake confidence. You end up with charts that look professional but rest on shaky design.

AI is a great drafting assistant. It is not your research brain. You still need someone who understands bias, respondent behavior, and data quality, plus basic steps like pilot testing and friction-aware design.

Try this: before launching your next AI‑drafted survey, run a five‑person pilot. Ask each person where they hesitated, guessed, or felt bored. Fix those friction points first, then worry about sample size.

🔗 Go Deeper: Nielsen Norman Group

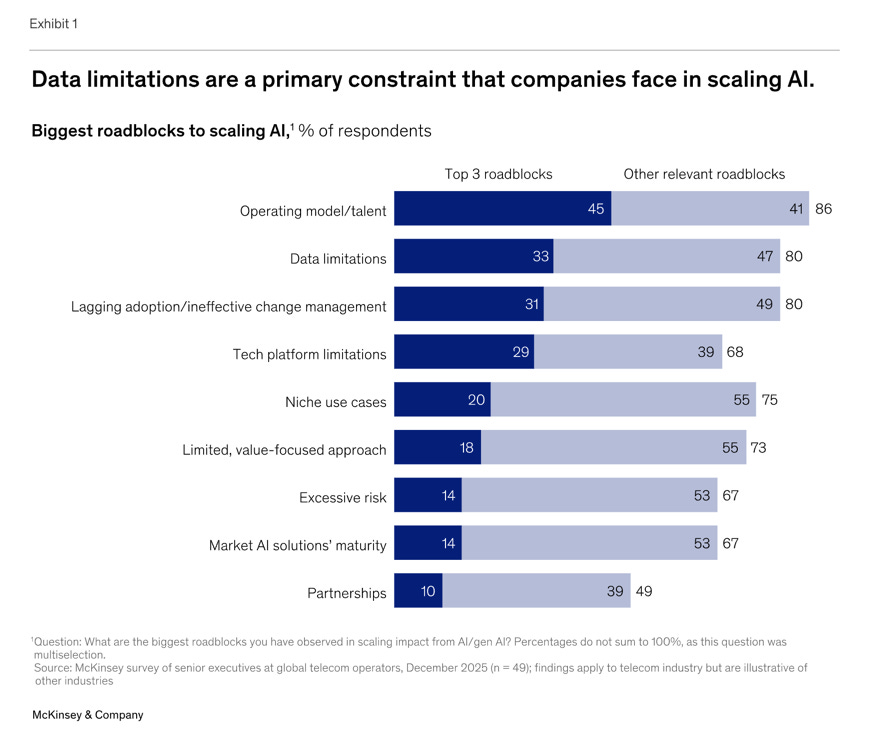

Agentic AI doesn’t scale on cool demos. It scales on clean data.

Everyone wants AI agents right now, but this piece throws a bucket of cold water on the hype: clever demos are easy; scaling real agents is brutally hard without solid data foundations.

Why it matters

The headline stat is telling: almost two-thirds of enterprises have tried agents, but fewer than 10% have scaled them to meaningful value. The blocker isn’t ambition or ideas—it’s the plumbing.

Agents live and die on the quality of the data they touch. If your systems are siloed, your lineage is fuzzy, and access controls are loose, those weaknesses show up fast once agents start taking actions instead of just summarizing.

What to do

The advice is refreshingly practical:

Start with a few high-impact workflows that are genuinely “agentable” instead of announcing a company-wide reinvention.

Invest in cleaner, more real-time customer data—where it lives, how it’s governed, and who can see what.

This is less about shiny AI features and more about the unglamorous work of data architecture and governance.

The bottom line

For CX leaders, this is a reminder that your agent strategy can’t be better than your data strategy. If your customer data is scattered and inconsistent, your agents will behave that way too. Putting sophisticated AI on top of broken systems doesn’t fix the underlying issues; it just exposes them faster.

Try this: identify three “agentable” workflows (for example, billing questions, order status, or appointment changes) and score each on data readiness: where the data lives, how clean it is, and whether permissions are clear. Start with the one that scores highest, not the one that sounds coolest.
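
One way to run that scoring, sketched under loud assumptions: the 1 to 5 ratings below are invented, the three dimensions are weighted equally, and the workflow names are just the examples above. Treat it as a worksheet, not a method from the McKinsey piece.

```python
# Hypothetical 1-5 ratings for each workflow (5 = ready, 1 = not ready).
workflows = {
    "billing questions": {"data_location": 3, "data_cleanliness": 2, "permissions": 4},
    "order status": {"data_location": 5, "data_cleanliness": 4, "permissions": 4},
    "appointment changes": {"data_location": 4, "data_cleanliness": 3, "permissions": 3},
}


def readiness(scores):
    """Total score across dimensions; equal weighting is an assumption."""
    return sum(scores.values())


def weakest_dimension(scores):
    """The lowest-rated dimension, i.e. what to fix before going further."""
    return min(scores, key=scores.get)


# Highest readiness first.
ranked = sorted(workflows, key=lambda name: readiness(workflows[name]), reverse=True)

for name in ranked:
    scores = workflows[name]
    print(f"{name}: {readiness(scores)}/15, weakest: {weakest_dimension(scores)}")
```

With these made-up ratings, order status comes out on top. The "weakest" column matters as much as the total, since one loose dimension (permissions, say) can sink an otherwise ready workflow.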

🔗 Go Deeper: McKinsey

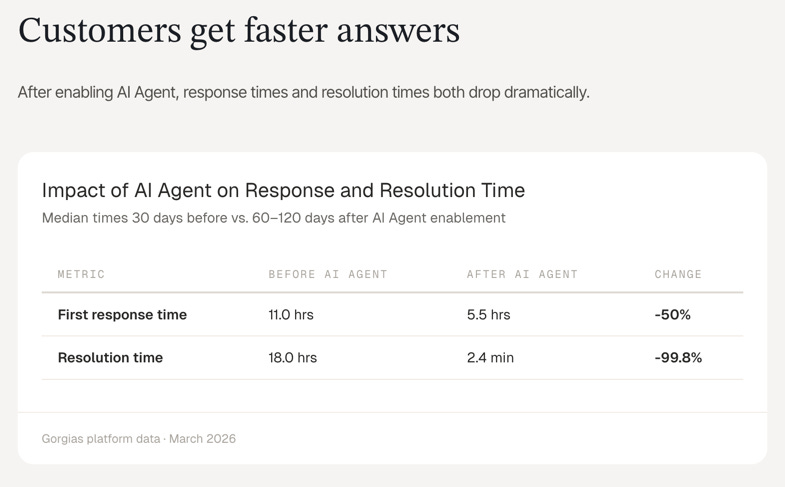

DCX Stat of the Week: Support costs more time than most brands realize

This dataset reframes support cost in a way most teams need to hear: the real currency is time.

Why it matters

Every ticket is a time sink—both for customers and agents. AI changes that equation quickly when it chews through the simple, repeatable stuff first, freeing humans for the work that actually requires judgment.

The numbers are pretty stark:

Brands using AI cut median first response time from 11 hours to 5.5 hours.

Median resolution time collapsed from 18 hours to 2.4 minutes.

That’s not a small tweak in experience; customers feel that difference immediately.

By the numbers

When automation passes the 50% mark, AI is doing the work of about 6.3 agents while the typical human team has 3 people on it.

At 60%+ automation, ticket volume explodes—up 143% year over year—while human hours only climb 6%. That’s what scaling support without scaling headcount looks like.

Even at the lower tiers of automation, the model still shows roughly $73K in estimated net annual savings after platform costs.
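
To put those figures on one scale, here is the arithmetic worked through. It uses only the numbers quoted above, and assumes that "up 143%" means volume grew to 2.43 times its prior level and that the volume and hours figures cover the same accounts and period.

```python
def pct_change(before, after):
    """Percentage change from before to after (negative means a reduction)."""
    return (after - before) / before * 100


# First response time: 11 hours down to 5.5 hours.
first_response = pct_change(11, 5.5)

# Resolution time: 18 hours down to 2.4 minutes, compared in minutes.
resolution = pct_change(18 * 60, 2.4)

# At 60%+ automation: tickets up 143%, human hours up 6%.
# Tickets handled per human hour therefore change by this factor.
throughput_factor = (1 + 1.43) / (1 + 0.06)

print(f"First response time: {first_response:.1f}%")
print(f"Resolution time: {resolution:.1f}%")
print(f"Tickets per human hour: {throughput_factor:.2f}x")
```

On those assumptions, first response time is cut in half, resolution time drops by roughly 99.8%, and each human hour covers about 2.3 times as many tickets as the year before.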

The bottom line

The real win isn’t just a cheaper support line. It’s the ability to handle growth without reflexively adding more people every time volume spikes. That opens up headroom to reassign humans to higher-value work and rethink what “great support” looks like when speed is almost instant.

Try this: have your team estimate, in hours, how much customer time and how much agent time your top three ticket types consume each week. Use that as the lens to prioritize which flows to automate or redesign first.
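
A back-of-the-envelope version of that estimate might look like the sketch below. The ticket types, weekly volumes, and minutes per ticket are all invented placeholders; swap in your own team's estimates.

```python
# (ticket type, tickets per week, customer minutes each, agent minutes each)
# All values are hypothetical placeholders.
ticket_types = [
    ("where is my order", 400, 12, 6),
    ("refund request", 150, 20, 15),
    ("password reset", 250, 5, 3),
]


def weekly_hours(tickets, customer_min, agent_min):
    """Return (customer hours, agent hours) consumed per week."""
    return tickets * customer_min / 60, tickets * agent_min / 60


rows = []
for name, tickets, customer_min, agent_min in ticket_types:
    customer_h, agent_h = weekly_hours(tickets, customer_min, agent_min)
    rows.append((name, customer_h, agent_h))

# Rank by total time consumed, customers and agents combined.
ranked = sorted(rows, key=lambda row: row[1] + row[2], reverse=True)

for name, customer_h, agent_h in ranked:
    print(f"{name}: {customer_h:.1f} customer h + {agent_h:.1f} agent h per week")
```

Ranking by combined hours is one choice among several. If you care most about customer effort, sort on the customer column alone and see whether the priority order changes.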

🔗 Go Deeper: Gorgias

🔗 MORE STATS: Daily Stats on Substack Notes

📊 DCX Case Study of the Week: How SeatGeek doubled AI CSAT at 51.5% automation

SeatGeek’s story is a good example of disciplined AI adoption in support, not just turning on automation and hoping for the best.

CX Challenge

During big events, their support team was drowning in repetitive questions like “Where are my tickets?” Volume spiked, response times stretched, and escalations piled up. Classic high-growth, high-friction pattern.

Action Taken

SeatGeek rolled out Zendesk AI agents, but they started narrow: one high-volume, well-understood use case.

They leaned on a zero-training model to generate context-aware responses using their existing knowledge, launched first with authenticated users, then iterated based on how agents actually performed. Only after proving value did they expand to more scenarios.

Result

In just 4 months:

Automated resolution hit 51.5% (up 6 points).

AI CSAT jumped from 34% to 70%.

Tens of thousands of customers got instant help with fewer escalations hitting the human team.

Lesson for CX Pros

This is a playbook worth copying: pick one painful, high-volume issue, solve it with automation, validate that customers are satisfied, then expand. And keep humans in the loop—not just as escalations, but as coaches improving the AI over time.

Quote

“The zero-training model has been amazing… it feels very personalized.” — Whitney Thomas, SeatGeek

Try this: choose one repetitive “Where is my…?” issue in your own business, document the ideal end‑to‑end resolution, and then test an AI‑assisted flow on a small, authenticated segment before expanding. Make CSAT on those interactions your gate for scaling.
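
Here is a minimal sketch of a CSAT gate, assuming 1 to 5 ratings where 4 and 5 count as satisfied. The 70% gate and the 30-response minimum are illustrative defaults, not thresholds from SeatGeek or Zendesk, and the pilot ratings are made up.

```python
def csat(ratings, satisfied_at=4):
    """Share of ratings at or above the 'satisfied' cutoff (assumed 4 on a 1-5 scale)."""
    if not ratings:
        return 0.0
    return sum(1 for r in ratings if r >= satisfied_at) / len(ratings)


def ready_to_expand(ratings, gate=0.70, min_responses=30):
    """Expand only with enough responses AND a CSAT at or above the gate."""
    return len(ratings) >= min_responses and csat(ratings) >= gate


# Hypothetical pilot: 40 ratings from the authenticated segment.
pilot = [5] * 20 + [4] * 10 + [3] * 5 + [1] * 5

print(f"Pilot CSAT: {csat(pilot):.0%}")
print("Ready to expand:", ready_to_expand(pilot))

# Ten perfect ratings still fail the gate because the sample is too small.
print("Tiny sample ready:", ready_to_expand([5] * 10))
```

The minimum-response check is there so a handful of happy early users can't wave the rollout through on their own.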

Further Reading: Zendesk case study

Have a case study to share? Reply and let me know!

If there’s a pattern across these stories, it’s this: AI amplifies whatever mindset you bring to customers. Aim small and short‑term, and it makes friction cheaper. Aim at long‑term value, and it makes better experiences possible at scale.

One question to leave with your team this week: where is your AI strategy genuinely improving the customer’s experience—and where is it just making friction cheaper?

See you next week.
