Your Customers are Asking AI for Money Help

Financial guidance is becoming conversational, which means your experience now needs stronger clarity, trust, and escalation design.

Your daily signal on AI and CX, minus the hype.

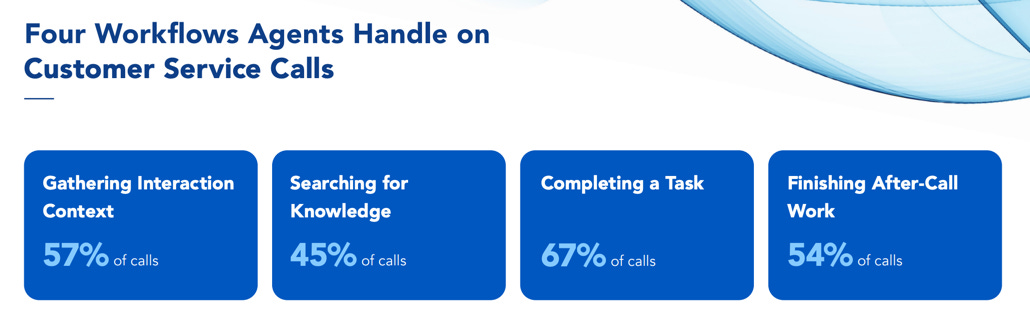

📌 DCX Stat of the day: 57% of customer calls require agents to gather context after an escalation (Verint). That is a journey memory problem. Your customer feels it as repetition. Your agent feels it as cleanup. The business feels it as cost.

In this issue:

→ AI guidance moves deeper into personal finance

→ Financial journeys need stronger guardrails

→ Escalations still lose too much context

→ Dashboards are becoming conversations

→ Parents get more visibility into teen AI use

🔎 Deep dive

Customers are already using AI for financial decisions

EY says nearly half of global consumers have used AI to support savings and investment decisions in the past six months. That should get your attention: AI in financial services has moved past the curiosity phase. Customers are already using it to interpret money, risk, products, and next steps.

The CX issue is not whether customers will ask AI for help. They already are. The issue is whether your customer experience is ready when AI becomes part of the decision path.

EY also found that 21% of consumers have used AI agents for financial product recommendations, while 14% have allowed AI to select financial service providers on their behalf. That is the real operating shift. AI is moving from advice to selection. Once that happens, your clarity, data quality, policy language, and escalation paths become part of the customer’s decision environment.

For CX leaders, the risk is misplaced confidence. A customer may trust a confident AI recommendation faster than they trust your fine print. That puts pressure on your journey to explain options clearly, show limits, and create a clean human path when the decision carries real consequences.

📬 Copy-Paste Take

We should review any AI-assisted journey where the customer is making a meaningful decision. If the assistant cannot explain the answer, show its limits, preserve context, and hand off cleanly, we may be scaling a trust problem instead of improving the experience.

OPERATOR PLAYBOOK

Put guardrails around AI guidance moments

If your AI helps customers make decisions, treat that journey differently from a password reset or order status check.

Audit every AI-assisted guidance flow for four things:

→ The assistant explains the basis for its answer in plain language.

→ The customer can tell when the answer is general guidance, not a guaranteed outcome.

→ The customer can reach a person before frustration becomes the trigger.

→ The system keeps the conversation context when escalation happens.

Then test whether a customer can challenge the answer without starting over.

Ask your team: Where could our AI sound more certain than the business can responsibly support?

Signal: The risk in AI guidance is not only a wrong answer. It is a confident answer in a moment where the customer needed judgment.

📈 Market Reality Check

Context loss is still the tax customers pay

Verint’s 2026 agent experience research found that 57% of calls require agents to gather interaction context after an escalation.

That should bother you. Customers do not care that one system handed off to another system. They experience it as repetition, delay, and a pretty clear sign that the company was not really listening the first time.

If your AI strategy improves containment but does not improve memory, you are leaving the most painful part of the journey intact. Your customer may get to the right person faster and still feel like your company forgot them.

Lost context turns escalation into rework.

🧰 Tool Worth Knowing

CFO Silvia

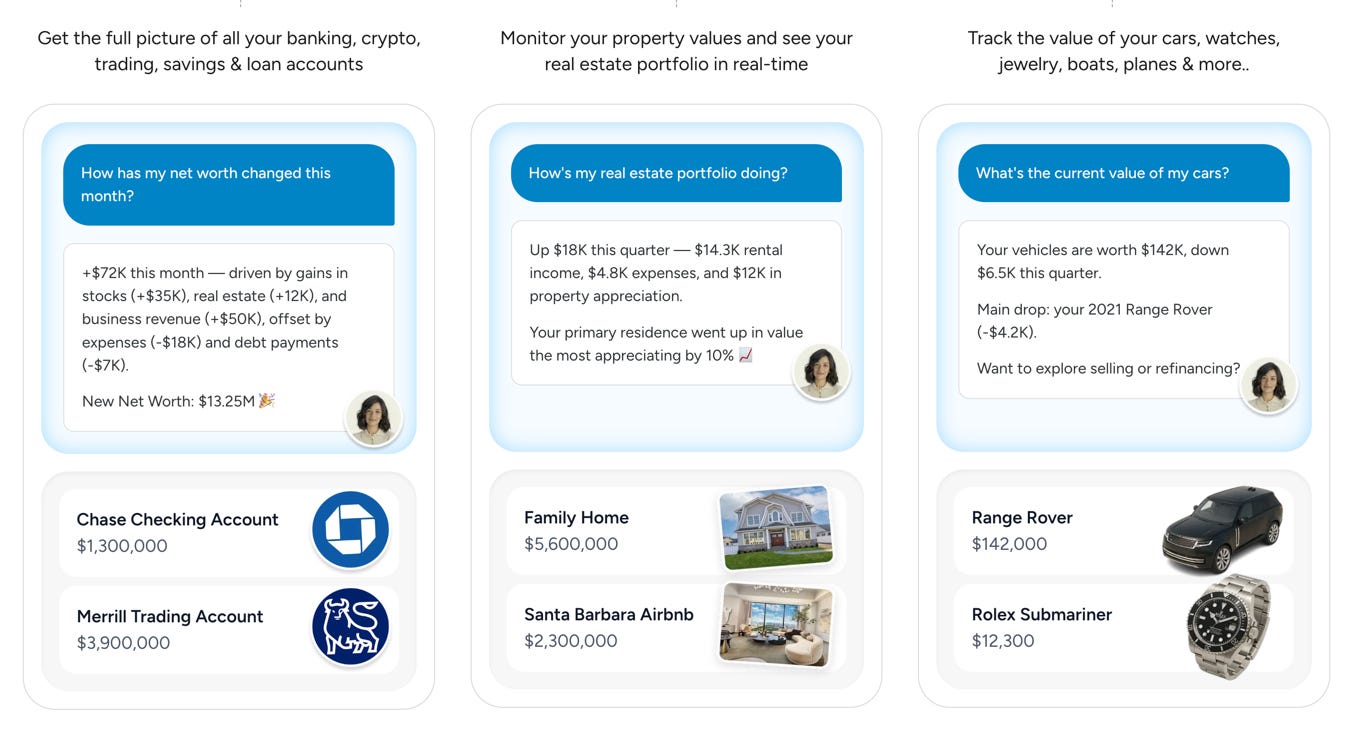

What it does: CFO Silvia is an AI personal finance assistant that connects bank accounts, crypto wallets, trading accounts, savings, loans, real estate, vehicles, and other assets into one financial dashboard. The company says it is used by more than 8,000 investors tracking over $15 billion in assets.

CX use case: You should study this because it turns personal finance from a reporting interface into a guidance conversation. The customer does not only see balances. They can ask what changed, where risk is building, what fees may be dragging performance, or how a scenario could affect their financial picture.

Worth watching because: Money is one of the highest-trust customer journeys. If AI guidance works here, your customers will expect similar clarity in insurance, telecom, healthcare, utilities, and every other category where decisions feel personal and consequences feel real.

Bottom line: CFO Silvia is a useful signal for CX teams: dashboards are being pulled into conversation, but the trust bar rises fast when the assistant touches financial decisions.

⚡ 90-Second CX Radar

Yelp is making its AI assistant more transactional

Yelp’s assistant is moving closer to recommendations, quotes, ordering, and booking. That turns local discovery into a guided action layer. If your brand depends on third-party discovery, the assistant’s view of your relevance becomes part of your customer journey.

Microsoft pushes Copilot deeper into commerce

Copilot Checkout and richer access to product catalogs point to a bigger shift: buying journeys are moving into AI-mediated environments. You will need cleaner product data, clearer policies, and fewer broken post-purchase handoffs, because the assistant may become the shopper's first filter.

Meta gives parents visibility into teen AI conversations

Meta is adding a supervision feature that lets parents see the topics their teens have discussed with Meta AI over the past seven days. The CX angle is trust design. When AI touches sensitive or family-adjacent journeys, your customers will expect visibility, boundaries, and control without needing a policy decoder ring.

🧭 Your Move

The shift in this issue is simple: AI is moving from answering basic questions to guiding customer decisions. That creates a higher bar. When the journey involves money, family, risk, service recovery, or a meaningful choice, your AI has to explain itself, show its limits, preserve context, and hand off cleanly.

Pick one AI-assisted journey where your customer is making a meaningful decision. Financial choice. Plan change. Upgrade. Cancellation. Claim. Care issue. Anything with consequence.

Now pressure-test it like a skeptical customer. Does the answer explain itself? Does it show uncertainty? Can the customer get to a person without performing frustration first? Does the handoff remember what already happened?

If one of those breaks, the experience may still look efficient on your dashboard while making the customer less confident.

AI guidance only works when the customer can understand the answer, question it, and get help without losing the thread.

Until tomorrow,

👥 Share This Issue

Think of one person who's wrestling with AI in CX right now and forward this to them.


If someone forwarded this to you, they thought you needed to see it before your next AI planning meeting. Get your own copy.