AI Is Exposing the Conversations Your QA Process Never Saw

Plus: Support teams are moving from tiny samples to full-journey visibility. That sounds useful until the system starts naming the problems everyone has learned to work around.

Your daily signal on AI and CX — minus the hype.

📌 DCX Stat of the day: Traditional customer support QA often reviews only 1% to 3% of conversations, leaving most customer interactions unseen by the teams responsible for improving them.

Source: The Next Web

In this issue:

→ Solidroad brings AI into support QA

→ KPMG shows the adoption gap

→ BAND tackles agent-to-agent coordination

→ Khoros rethinks community as support infrastructure

→ Miravoice turns surveys into AI-led conversations

🔎 Deep dive

Solidroad is going after the QA blind spot

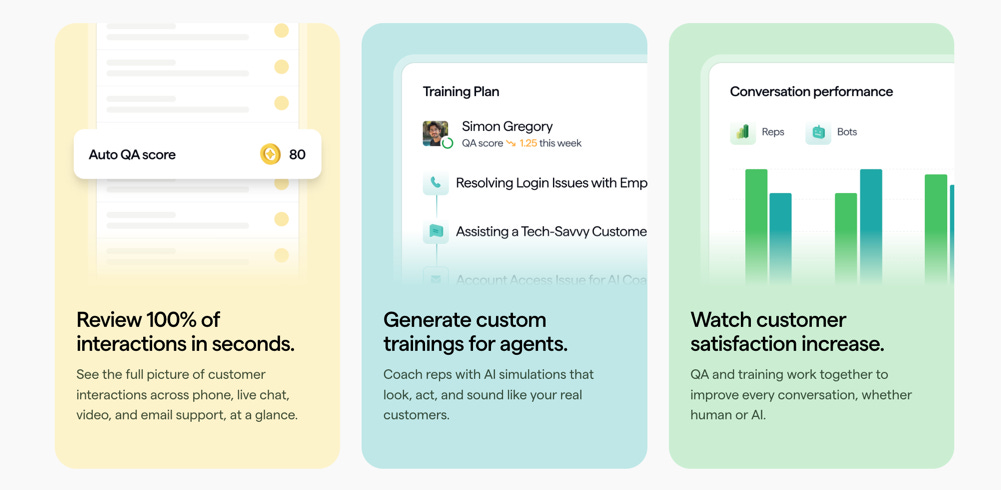

Solidroad raised $25 million to expand its AI quality assurance and training platform for customer support teams. The company says its platform evaluates 100% of customer interactions across human and AI agents, then turns those reviews into coaching and training simulations. Customers include Ryanair, ŌURA, ActiveCampaign, and Crypto.com.

That matters because most support leaders still manage quality through tiny samples, escalation noise, and the occasional “we should probably look into this” Slack thread. Not exactly a precision instrument.

The first impact shows up in chat, email, phone, QA, onboarding, and AI agent oversight. If every conversation can be reviewed, coaching gets more specific. Risk gets spotted earlier. Policy drift becomes visible. The tradeoff is simple: AI starts judging the work, so leaders need to define what good support actually looks like before the system starts scoring people, bots, and customer moments against a fuzzy standard.

Source: The Next Web

📬 Copy-Paste Take

Worth reading this issue of DCX AI Today. The useful point is that AI in support is moving past bots and into the operating layer: QA, coaching, escalation, and recovery. That creates a better path to consistency, but it also means CX leaders need clearer ownership for what happens when AI evaluates every customer conversation.

Read it here: DCX AI Today

OPERATOR PLAYBOOK

Pressure-test the conversations nobody reviews

AI support does not fail only when the bot gives a bad answer. It fails when no one notices the pattern fast enough.

Audit every support QA flow for four things:

Which interactions are currently reviewed

Which channels are barely sampled

Which AI-agent conversations get scored against customer outcomes

Which failure patterns trigger coaching, policy review, or journey fixes

Then test whether your QA program can catch a bad pattern before it becomes a repeat contact problem.
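If you want a quick way to run the first two checks, here is a minimal Python sketch. It assumes you can export conversations with a channel, a handler (human or AI), and a flag for whether QA reviewed them. The field names, the sample records, and the 3% threshold (borrowed from the 1% to 3% sampling range in today's stat) are illustrative assumptions, not any vendor's schema.

```python
from collections import defaultdict

# Hypothetical export: one record per conversation.
# Field names are assumptions; map them to whatever your helpdesk provides.
conversations = [
    {"channel": "chat", "handled_by": "ai", "reviewed": False},
    {"channel": "chat", "handled_by": "ai", "reviewed": False},
    {"channel": "email", "handled_by": "human", "reviewed": True},
    {"channel": "email", "handled_by": "human", "reviewed": False},
    {"channel": "phone", "handled_by": "human", "reviewed": True},
]


def review_coverage(records):
    """Return the share of reviewed conversations per (channel, handler) pair."""
    totals = defaultdict(int)
    reviewed = defaultdict(int)
    for record in records:
        key = (record["channel"], record["handled_by"])
        totals[key] += 1
        if record["reviewed"]:
            reviewed[key] += 1
    return {key: reviewed[key] / totals[key] for key in totals}


def barely_sampled(coverage, threshold=0.03):
    """List the (channel, handler) pairs whose review rate falls below the threshold."""
    return sorted(key for key, rate in coverage.items() if rate < threshold)


coverage = review_coverage(conversations)
for key, rate in sorted(coverage.items()):
    print(key, f"{rate:.0%}")
print("Barely sampled:", barely_sampled(coverage))
```

This only measures what gets looked at. It says nothing about whether the reviews score customer outcomes or trigger coaching, which is what the last two audit questions cover.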

Ask your team: What customer frustration would we miss if we only reviewed the cleanest 2% of interactions?

Signal: Support quality is moving from sample review to full-conversation intelligence.

📈 Market Reality Check

Companies are funding AI before fixing the human adoption layer

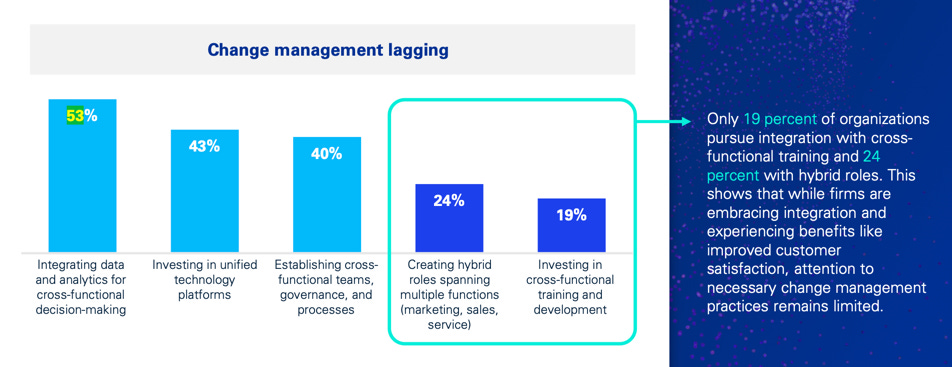

The KPMG Customer Advisory Research Study found that 53% of organizations prioritize integrating data and analytics, 43% prioritize unified technology platforms, and 40% prioritize cross-functional teams, governance, and processes. But only 19% invest in cross-functional training and development.

That gap is where AI-supported CX quietly stalls. You can fund the data layer, platform layer, and governance layer, but if the people doing the work are not trained across functions, adoption becomes a handoff problem. Support sees one thing. Product hears another. Operations translates it differently. Then everyone wonders why the shiny new system did not change the customer experience.

System investment without human adoption = expensive friction.

🧰 Tool Worth Knowing

BAND

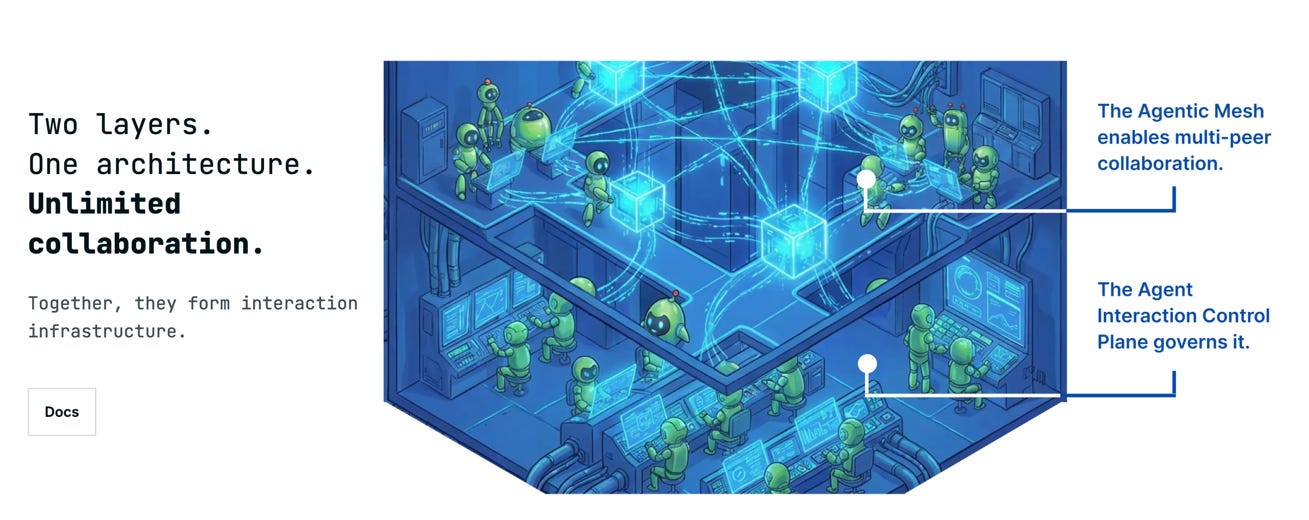

What it does: BAND.ai just came out of stealth with $17 million in seed funding to build an interaction layer for AI agents. Its pitch is that agents across different frameworks, clouds, teams, and organizations need a way to communicate and coordinate in real time.

CX use case: This gets interesting when service, billing, fulfillment, identity, fraud, sales, and retention agents need to work across systems without dropping context.

Worth watching because: Agent coordination sounds technical until billing, fulfillment, fraud, and support all give the customer different answers. Then it becomes a trust problem.

Bottom line: Early signal, not proven CX infrastructure yet. But the problem is real: agent sprawl will create handoff problems inside the business before customers ever see the shiny version.

NEW: The DCX AI Today AI Tool Directory. If you lead a CX team and want a curated shortlist of tools worth evaluating, this is your starting point.

⚡ 90-Second CX Radar

Khoros turns community into cited AI support

Khoros launched Aurora AI, a reworked enterprise community platform where peer knowledge becomes cited answers and customer communities help with support, product feedback, and engagement. The question is whether those answers reflect lived customer knowledge or turn community wisdom into another sanitized support script.

Miravoice wants AI to run long-form phone research

Miravoice raised $6.3 million to build AI voice agents for structured phone surveys and interviews. More interviews may help teams hear from more customers, but volume is not understanding. The risk is mistaking a bigger research pipe for sharper customer judgment.

Octen is building search for AI agents

Octen launched with $10 million in seed funding to build a search engine designed specifically for AI agents. If AI agents become the first layer of discovery, your help content, product pages, and policies need to be legible to machines before a customer ever sees them.

🧭 Your Move

If AI is exposing the conversations your QA process never saw, your job is to make sure “quality” means more than speed, containment, and fewer tickets.

Pick one support journey this week and trace how quality is actually judged. What does the system reward? What does it miss? Where does coaching happen? Which failure patterns trigger action? And when the customer leaves the interaction annoyed but “resolved,” does anyone see that?

The next support advantage will come from knowing what happened, why it happened, and whether the customer would trust you again.

Until tomorrow,

👥 Share This Issue

Think of one person who’s wrestling with AI in CX right now and forward this to them.

