Customers Are Giving AI a Shorter Leash

Plus: They’ll try faster service. They still expect memory, reversibility, and a human exit when the stakes rise.

Your daily signal on AI and CX — minus the hype.

📌 DCX Stat of the day: 83% of U.S. consumers say the brand should be held accountable when an AI-powered interaction goes wrong. 82% expect AI to meet the same standard as a human representative.

Takeaway: Customers are not separating the bot from the business. If the interaction fails, the brand owns the damage. (Source: 2026 Delight AI Index)

In this issue:

→ WhatsApp gets serious as an AI service front door

→ Customers expect brands to own AI failures

→ Voice agents move into physical journeys

→ Privacy becomes part of the experience

🔎 Deep dive

WhatsApp is becoming an AI service front door

Genesys and Meta are pushing WhatsApp beyond messaging and into a fuller service environment: text, voice, proactive outreach, virtual agents, human agents, intent routing, and shared context inside Genesys Cloud. Genesys says the combined Genesys Cloud and WhatsApp capabilities already support about 420 million messages each month across more than 1,000 organizations. That is real operating volume.

The first place this shows up will be messy service moments. A billing question starts in text. The answer gets complicated. The customer wants a person. The company needs the context, consent, and prior promise to move with them.

The executive implication is clear: channel strategy now has to include memory, authority, escalation, and recovery. The customer consequence is lower repeat effort when it works. The business consequence is less cleanup work after broken handoffs. The hidden risk is assuming the integration fixes the operating model. It doesn’t. It only makes the gaps easier to notice.

📬 Copy-Paste Take: Send this to your COO

Customers will use AI and messaging when it saves time. They will blame us when the handoff, memory, or recovery path fails. Before we add more AI into service channels, we should audit where customers still have to repeat themselves.

OPERATOR PLAYBOOK

Audit the AI handoff before customers do it for you

Audit every AI-assisted service flow for four things:

→ Where the customer can reverse a decision or correct a bad answer

→ Whether conversation history survives the channel switch

→ Which actions require human approval

→ What happens when the customer says, “That’s not right”

Then test whether a customer can move from bot to human without re-authenticating, repeating context, or losing the prior promise.

Ask your team: Which AI action would create the biggest cleanup mess if it were wrong?

Signal: Customers may tolerate automation. They are far less patient with automation that traps them.

📈 Market Reality Check

Customers want AI with a receipt and an undo button

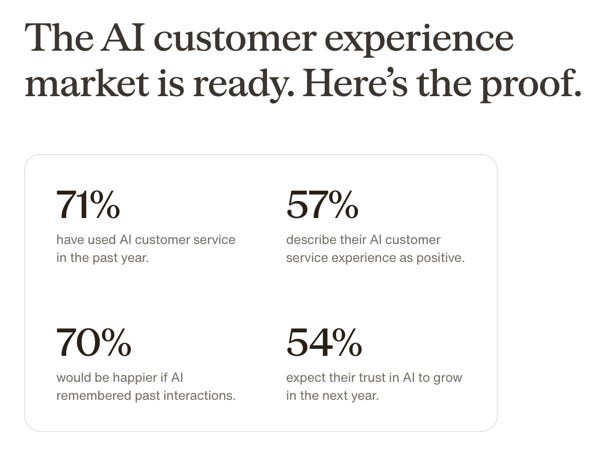

The 2026 Delight AI Index says 71% of consumers have used AI customer service in the past year, but only 57% describe the experience as positive. That is the gap CX leaders should care about. Usage is already here. Confidence is still conditional.

The sharper signal: 70% would be happier if AI remembered past interactions, and 57% trust AI more when they can undo what it did. Delight also says consumers rank the ability to reverse mistakes as the top trust builder, ahead of accuracy and transparency. That should make every CX team pause. Customers are not asking for AI to sound more polished. They want control when it acts.

AI adoption without memory + reversibility = faster frustration.

🧰 Tool Worth Knowing

SoundHound OASYS

What it does: OASYS is SoundHound’s new agentic AI platform for building, managing, evaluating, and improving conversational AI agents across phones, web chat, kiosks, social media, in-car systems, TVs, drive-thrus, and other customer-facing channels. SoundHound says the platform can ingest documentation and transcripts, visualize transaction flows, and coordinate multiple agents inside one interaction.

CX use case: This fits service moments that do not live neatly inside a contact center. Appointment scheduling. Retail orders. Insurance claims. Payments. Prescription refills. Drive-thru ordering. In-car commerce. The customer does not care which system owns the moment. They care whether the answer is correct and the action can be trusted.

Worth watching because: AI support is moving into physical and voice-heavy journeys. That raises the bar for context, consent, escalation, and auditability.

Bottom line: OASYS extends the AI conversation beyond the support console and into real customer environments. The caution is obvious: a “self-learning” label sounds powerful, but CX leaders should ask for deployment evidence, failure handling, and human approval rules before trusting the platform with sensitive customer actions.

NEW: The DCX AI Today AI Tool Directory. If you lead a CX team and want a curated shortlist of tools worth evaluating, this is your starting point.

⚡ 90-Second CX Radar

Privacy is becoming part of the service promise

Vogue Business reports that only 1% of surveyed Vogue and GQ readers found AI shopping recommendations entirely useful, while 24% trusted AI recommendations and summaries. For retail and luxury teams, privacy is moving from legal language to customer signal. If personalization feels grabby, the experience starts with suspicion.

Meta is reportedly building a more capable personal assistant

Reuters, citing the Financial Times, reports Meta is developing a more personalized agentic assistant for everyday tasks and testing it internally. For CX leaders, the question is whether more customer decisions begin inside assistants your brand does not control. That changes discovery, comparison, service, and recovery.

Anthropic takes vertical AI deeper into finance

Anthropic launched 10 finance-focused agents for tasks such as pitchbooks, audits, and credit memos. This sits upstream from customer experience, but it matters. In regulated industries, faster internal work only helps customers if the policy, approval, and audit trail stay clean.

🧭 Your Move

Pick one AI-assisted service journey and identify the moment when the customer loses control.

Not where the bot answers slowly. Not where the UI is clunky. The moment where the customer cannot correct, reverse, escalate, or prove what was promised.

That is where trust gets expensive.

The AI experience is only as strong as the customer’s way out when it goes wrong.

Until tomorrow,


