The Chatbot Era Was the Easy Part

Plus: Agents are now being pointed at core workflows. That means CX leaders need rules for action, not just better answers.

Your daily signal on AI and CX — minus the hype.

📌 DCX Stat of the day: 84.9% of consumers prefer a human agent over an AI agent for customer service. Even when assured their issue would be resolved either way, 80.1% still prefer a human. (Source: Metrigy)

In this issue:

→ Banking gets a governed agent layer

→ Customers still want human assurance

→ Claims AI moves into first notice of loss

→ Travel AI tests policy-safe assistance

→ Sales AI learns from bad conversations

🔎 Deep dive

Banking AI just moved closer to the core

Fiserv launched agentOS, an agentic AI operating system for banks and credit unions. The useful part is not the agent label. Everyone has that now. The signal is where the agents sit: core banking, payments, issuer processing, and servicing.

That changes the CX conversation. In banking, customer journeys depend on trust, permission, auditability, and clean execution. If an AI agent touches loan onboarding, fraud, disputes, deposits, or servicing, it is no longer just answering questions. It is moving through workflows where mistakes carry financial, regulatory, and relationship risk.

The first customer impact will show up in high-stakes service moments: account issues, loan status, fraud flags, payment disputes, and servicing follow-up. The business upside is faster execution with fewer manual gaps. The hidden risk is pretending a governed platform fixes unclear policy, weak data, or messy exception handling.

📬 Copy-Paste Take

AI agents are moving from the edge of service into the operating core. That means CX, risk, compliance, ops, and IT need one shared answer to a hard question: what can the agent actually do when the customer’s money, identity, or trust is on the line?

OPERATOR PLAYBOOK

Draw the permission map before the agent goes live

The next agent risk is not only bad answers. It is unclear authority.

Audit every AI-assisted service flow for four things:

Which actions the agent can take without approval

Which decisions require human review

What evidence gets logged for audit or dispute review

How the customer recovers when the agent gets stuck

Then test whether a customer can move from AI to human support without losing context, restarting the request, or waiting for a second team to interpret what happened.
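For teams that want to hand this audit to engineering, here is a minimal sketch of a permission map in Python. It is one possible shape, not a reference design: the action names, queue names, and evidence fields are all hypothetical, and the assumption that unlisted actions default to "forbidden" is a design choice you may or may not share.

```python
from dataclasses import dataclass
from enum import Enum


class Authority(Enum):
    AUTONOMOUS = "agent may act without approval"
    HUMAN_REVIEW = "a person must approve first"
    FORBIDDEN = "agent may not act"


@dataclass(frozen=True)
class ActionRule:
    authority: Authority
    evidence: tuple      # fields logged for audit or dispute review
    recovery_path: str   # where the customer goes when the agent gets stuck


# Hypothetical actions and queues, for illustration only.
PERMISSION_MAP = {
    "share_loan_status": ActionRule(
        Authority.AUTONOMOUS, ("customer_id", "loan_id"), "servicing_queue"),
    "open_payment_dispute": ActionRule(
        Authority.HUMAN_REVIEW, ("customer_id", "transaction_id", "transcript"), "disputes_team"),
    "release_fraud_hold": ActionRule(
        Authority.FORBIDDEN, ("customer_id", "transcript"), "fraud_team"),
}

# Assumption: anything not on the map is off-limits and routed to a person.
DEFAULT_RULE = ActionRule(Authority.FORBIDDEN, ("transcript",), "general_support")


def rule_for(action):
    """Look up the rule for an action, falling back to the default."""
    return PERMISSION_MAP.get(action, DEFAULT_RULE)


def audit_gaps(permission_map):
    """List actions that lack logged evidence or a recovery path."""
    return [name for name, rule in permission_map.items()
            if not rule.evidence or not rule.recovery_path]


def handoff_packet(action, summary):
    """Bundle what a human needs so the customer does not have to restart."""
    rule = rule_for(action)
    return {
        "action": action,
        "route_to": rule.recovery_path,
        "authority": rule.authority.name,
        "summary": summary,
    }
```

The point of the sketch is that each of the four audit items becomes a field someone has to fill in. If `audit_gaps` returns anything, or if the handoff packet cannot carry enough context for a human to pick up mid-request, the flow is not ready.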

Ask your team: Where are we giving AI workflow access before we have decision rules the business actually trusts?

Signal: The more an agent can do, the more important its boundaries become.

📈 Market Reality Check

Production is exposing the weak spots

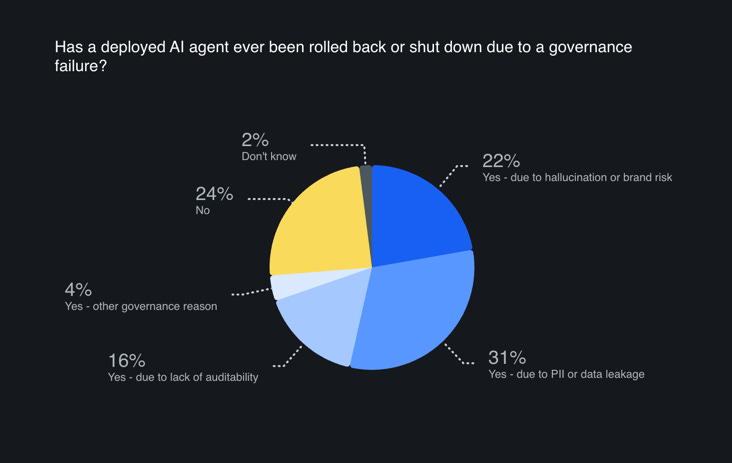

Sinch’s AI Production Paradox report found 74% of enterprises have already rolled back or shut down a live AI customer communications agent after deployment because of a governance failure.

That is the part worth sitting with. The market has moved past “can we launch this?” and into “can we run this safely when real customers behave like real customers?” The recovery cost shows up in repeat contacts, escalations, compliance review, customer distrust, and employees forced to explain why the shiny new thing failed.

Agent value = task completion minus cleanup cost.
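To make that equation concrete, here is a rough sketch in Python. Every number below is hypothetical and chosen only to show how the arithmetic can flip negative; the parameter names are assumptions, not metrics from the Sinch report.

```python
def agent_value(tasks_completed, value_per_task, cleanup_incidents, cost_per_cleanup):
    """Net value = value of completed tasks minus the cost of cleaning up failures."""
    return tasks_completed * value_per_task - cleanup_incidents * cost_per_cleanup


# Hypothetical month: 1,000 completed tasks worth $4 each,
# but 150 failures that each cost $30 in repeat contacts and escalations.
net = agent_value(tasks_completed=1000, value_per_task=4,
                  cleanup_incidents=150, cost_per_cleanup=30)
print(net)  # -500
```

In this made-up case the agent completes plenty of tasks and still loses money, because a modest failure rate carries an expensive recovery path.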

🧰 Tool Worth Knowing

insured.io Claims AI

What it does: insured.io’s Claims AI is a conversational AI agent for insurance carriers. It handles First Notice of Loss across voice and chat, searches policy information, and submits claims into insurer systems.

CX use case: This is built for one of the most emotionally loaded service moments: filing a claim. A customer may be dealing with damage, loss, confusion, and urgency. The intake experience has to be fast, clear, and easy to recover from if something goes sideways.

Worth watching because: Claims is where AI can either reduce stress or create a bigger mess. The workflow needs policy context, system access, language support, and a clean path to a person.

Bottom line: This is a better AI use case than generic deflection. It is aimed at a specific journey, a specific job, and a moment where speed and clarity actually matter.

⚡ 90-Second CX Radar

FCM Travel reworks Sam as an AI layer across the travel journey

FCM says Sam will support travelers, arrangers, and travel managers across nearly 100 countries starting in June. The strongest CX signal is not the assistant itself. It is the claim that policy rules, supplier preferences, approvals, and handoffs to consultants are built into the experience.

BCG trains an AI sales agent on what not to copy

BCG is training its customer-facing AI agent, Jamie, on strong and weak sales behaviors from customer conversations. That is a useful shift. AI training should not only model the best performer. It should also learn which behaviors create confusion, pressure, or mistrust.

Parloa and SAP connect service agents to business process

Parloa and SAP are tying customer-facing AI agents into SAP Service Cloud and business data. The customer benefit only shows up if the agent can move from conversation to resolution. Otherwise, it is another polite front door attached to the same old back-office wait.

🧭 Your Move

If your company is building AI agents, stop reviewing them like tools and start reviewing them like operating participants.

Ask what they can decide. Ask where they can act. Ask who owns the decision rules. Ask what happens when the customer is angry, confused, vulnerable, or financially exposed.

The agent is not the strategy. The strategy is the operating model around it.

Until Monday,
